Post by: Anis Farhan

Smartphones have dominated how we interact with digital assistants, messaging, maps, and media. But they come with frustrations: we constantly pull them out, fight screen glare, get interrupted, and need our hands for almost everything. Smart glasses promise a different model: wear the device, glance, gesture, use voice, and let AI do the rest.

Meta’s newest glasses mark a milestone: a display built into the lens (so you don’t always have to look at a phone screen), a neural wristband that senses gestures (pinch, swipe, etc.), cameras, microphones, speakers, and AI voice control. The idea is that many tasks, such as messages, captions, navigation, and even translations, can be done more seamlessly, leaving the phone in the pocket more often.

The new glasses aren’t just about novelty. They reflect a broader push in tech: AI + wearables + more natural input (voice, gesture, vision). The integration of display, gesture control, voice, and AI aims to reduce friction. Instead of unlocking a phone, opening an app, tapping, and closing it again, smart glasses try to collapse that chain.

Here’s a look at what the latest model of smart glasses (Meta’s Ray-Ban Display) achieves, and where it still struggles.

What they're good at:

Display visuals in the lens: The newest Ray-Ban glasses include a screen in one lens for displaying messages, visual responses from AI, navigation prompts, captions, etc.

Gesture control via wristband: A Neural Band detects small hand gestures so you can scroll, select, change volume, etc., often without speaking.

Live captions & translations: Spoken words can appear as text in real time; translations also work live in many situations.

AI integration & app support: Messaging, video calls, and other familiar smartphone apps are supported so users can send/receive messages, view content, etc.

What remains challenging or limited:

Field of view & display brightness: Because the display sits in only one lens and covers a limited angle, viewing can feel restrictive. Bright sunlight can still make the display hard to see.

Battery life: The glasses themselves last around six hours of continuous use; the carrying case adds much more, but you’re still expected to recharge often. Gesture sensors and the display consume power.

Reliability in real-world scenarios: Live demos have failed or glitched (e.g. display sleeping or gestures not registering) during product reveals. These highlight potential instability under stress or heavy usage.

Style, comfort, and aesthetics: Even though the design has improved, weight, bulk, comfort over time, and looks remain concerns. Prescription compatibility and fitting also remain immature.

Data, privacy, and security: Always-on cameras, audio input, display of messages in lens, etc., raise issues about who can see/hear what, how data is processed, what leaks might occur.

Smart glasses are making fast progress, but they will not replace smartphones immediately. Here are the key factors that will decide whether glasses reduce our dependence on phones, or even replace them in certain use-cases.

Where smart glasses could reduce dependence:

Quick interactions: checking messages, notifications, captions, navigation prompts, voice assistant queries. These often involve short usage, where pulling out a phone feels cumbersome.

Hands-free tasks: driving, walking, commuting, cooking — when holding or accessing a phone is inconvenient or unsafe.

Accessibility: for users with visual impairments, hearing challenges, or mobility issues, features like live descriptions, captions, and gesture input help.

Fitness & outdoor activities: models targeting athletes include fitness tracking, action cameras, etc., letting users skip the phone for some parts of workouts, hikes, etc.

Where phones will continue to be needed:

Rich content consumption: long reading, video streaming, gaming, intensive media editing still favor larger screens.

Productivity tasks: typing, heavy document work, spreadsheets etc. Phones or laptops still offer more comfort & capability.

Battery-heavy tasks: camera-intensive video, continuous use of display & AR features consume power; phones are better built for long run times.

Ecosystem & app support: many apps are still phone-first; glasses are catching up but many use-cases aren’t yet optimized for them.

Beyond the commercial devices, there are academic/experimental innovations that are helping pave the path forward:

Eye-tracking systems that are low-power and non-invasive, aimed at reducing the need for external cameras while improving control and responsiveness. One such system can recognize eye movements with high accuracy using very little battery.

Gesture recognition systems that activate only when needed (using audio cues or event-triggers) to save power and improve battery life, while still delivering responsiveness.

Wearables that can support memory assistance: detecting contextual cues, helping recall routine tasks (e.g., reminding where you left your keys), based on a combination of sensors, audio, and AI processing.

These are helping address core limitations: energy use, natural input, usefulness in real daily settings.

Here are the hurdles that companies must overcome, and what users will expect, before smart glasses can replace phones for many people.

Improved battery and power management: Longer battery life in both glasses and accessories. Power usage of displays, gesture sensors, always-on features needs to be lower.

More natural, reliable input mechanisms: Gesture, voice, and eye-tracking input must work under varied lighting, with background noise, with gloves, etc. Latency and misfires must come down.

Better display optics: Seamless visuals, good brightness, minimal distortion, supporting varying vision (prescriptions, etc.), comfortable for long use.

Lightweight, stylish design: Glasses must look like regular glasses in many situations; weight and comfort must match eyeglasses standards, not be bulky hardware.

Strong offline capabilities / low latency: Many apps work only if connected. For wider adoption, features must gracefully work offline or with intermittent connectivity.

Privacy, security & data handling: Always-on sensors (camera, mic) raise privacy risks. Clear policies and protections are needed: who owns the data, how securely it is stored, and what is processed on the device versus in the cloud.

Affordability: Price premiums are high. For mass adoption, costs need to come down, supply chains need to improve, and economies of scale and variants (sport, fashion, fitness) need to drive volume.

Meta’s recent product reveals show several signs that this idea is moving from futuristic concept toward viable consumer product:

Integration of display + gesture + AI assistant: These are combined in one device rather than standalone experimental features.

Use of a wristband to enhance input options: helps overcome limitations of voice or touch alone.

Inclusion of features like real-time translation, captions, camera + video call sharing from glasses perspective.

Targeted variants: sport/fitness models, fashion models, etc., showing that manufacturers recognize different use-cases.

The price point indicates a premium product in an early-adopter phase. But it sets benchmarks: expectations on design, features, and usability.

Though there were glitches in live demos (display not activating, gesture control misfires), these are expected in early generations. The critical factor will be how stable it becomes once in many hands.

If current progress continues, here’s a plausible scenario for smart glasses over the near future:

More widespread models with display + AI: Several manufacturers (not just Meta) will begin releasing variants with integrated displays.

Price drops and more options: mid-tier and lower-cost versions will appear, especially for fitness / outdoors / focused use-cases.

Better battery and more efficient sensors: through better hardware + smarter software (turning off sensors when not needed etc.).

App ecosystem adaptation: apps redesigned for glanceable UIs, voice-first interactions, image/video sharing from glasses.

Regulatory & privacy frameworks will likely evolve: laws & norms for privacy, public video recording, etc., to ensure responsible use.

It’s possible that for many users, “phone in pocket, glances at glasses” becomes a normal workflow for certain tasks (navigation, messaging, captions, reminders).

Smart glasses are not yet ready to fully replace smartphones in most people’s daily lives. But recent advances (built-in displays, gesture control, AI assistants, translation, camera usage) suggest we are on a fast track toward reducing dependence on phones. For tasks that require a quick glance, hands-free interaction, or contextual awareness, smart glasses are becoming viable alternatives.

In the meantime, smartphones will still be needed for heavy content, long usage, and more complex interactions. But as designs improve, battery life increases, and input methods get more natural, smart glasses are likely to become a major supplement — maybe even a primary device for many tasks.

For anyone curious or interested, the coming 2-3 years will be decisive. Choose wearables that let you test features, track their improvements, and watch how your own daily interactions might shift. The future may still include phones, but smart glasses are staking a claim to be much more than just an accessory.

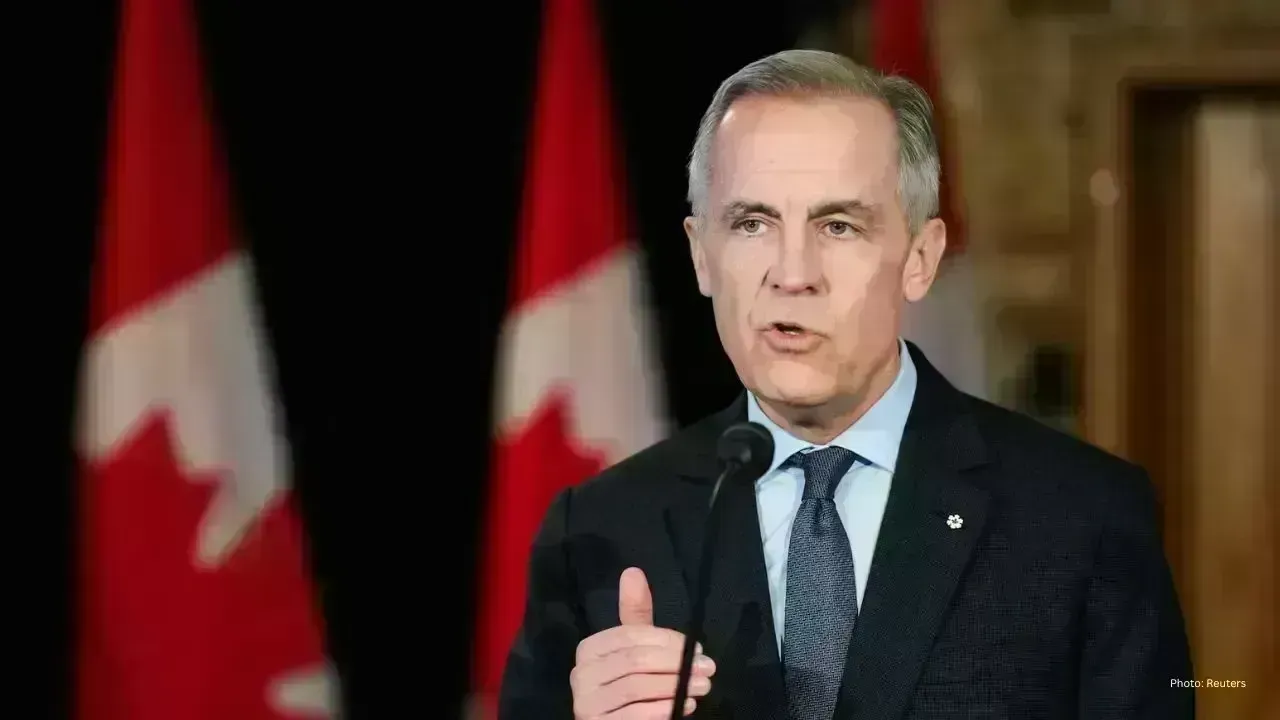

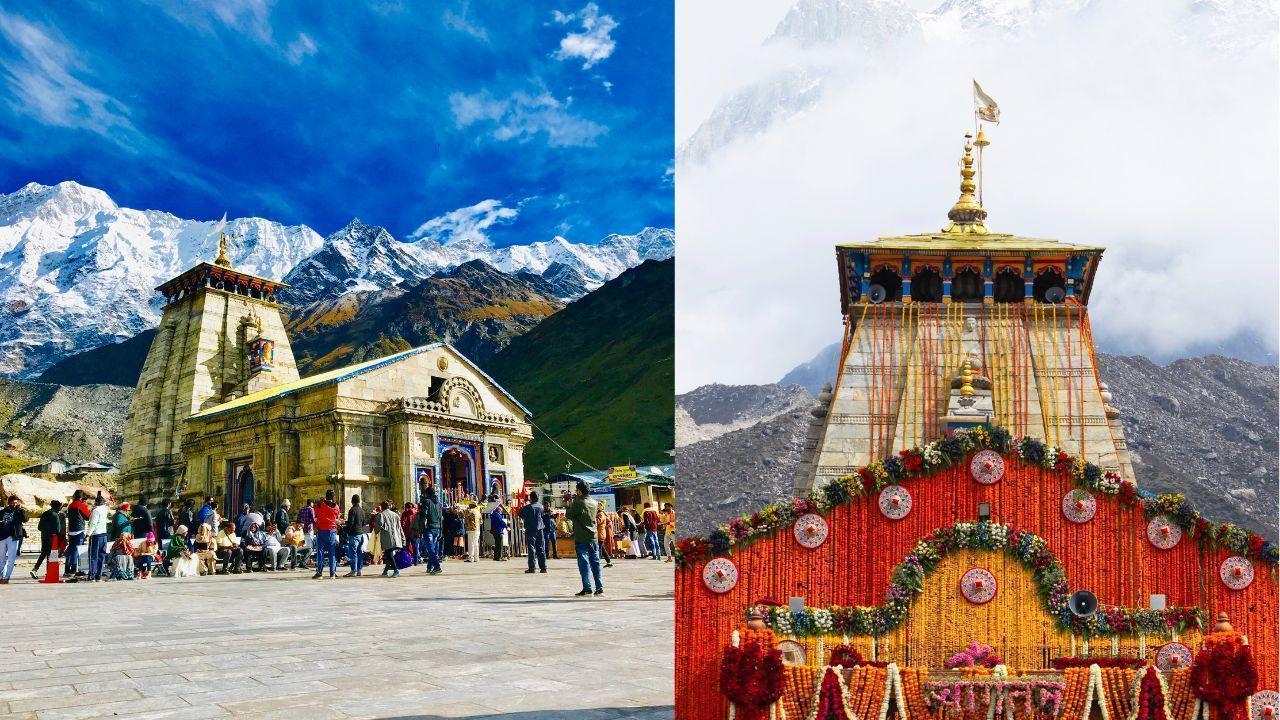

Kedarnath Temple Opens for Yatra 2026

Sacred shrine reopens after winter as Char Dham Yatra begins with rituals, chants, and thousands of

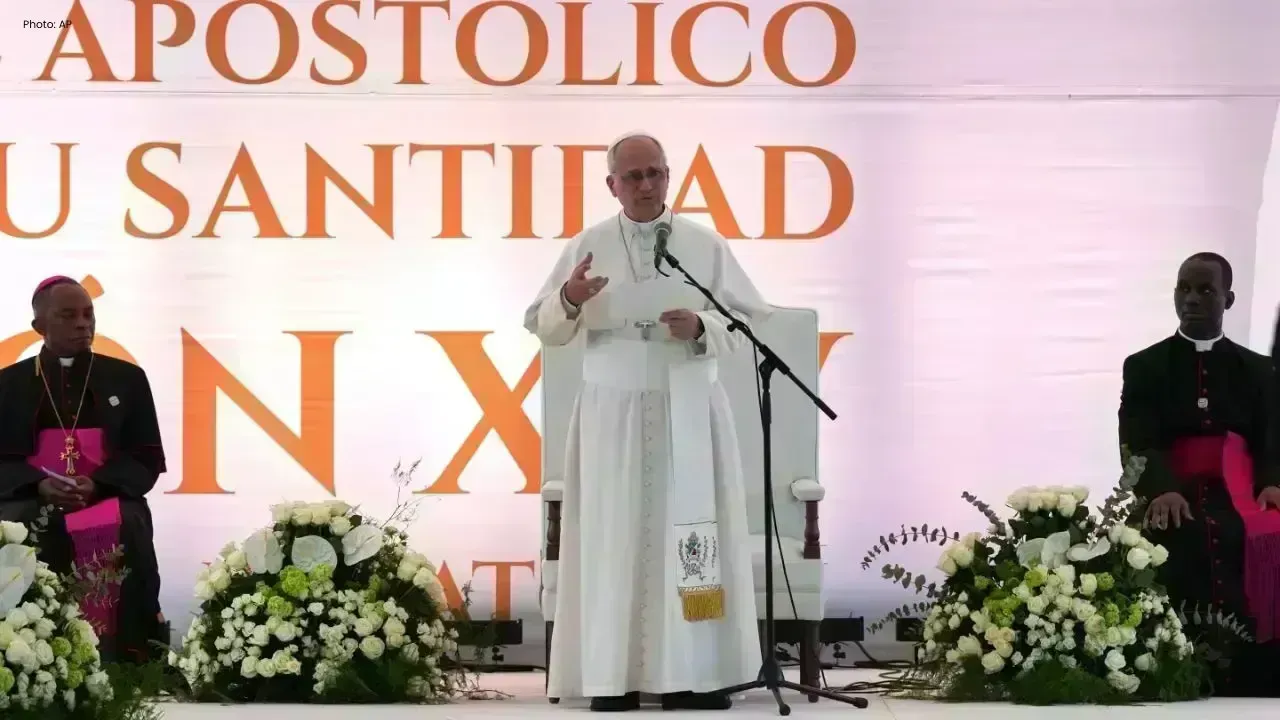

Pope Visit Puts Prison Abuses in Focus

Pope Leo XIV visits Equatorial Guinea prison highlighting rights concerns and migrant deportation is

Taiwan President Delays Africa Visit Move

Lai Ching-te postpones Eswatini trip after flight permits revoked, Taiwan accuses China of pressurin

Elevate Your Career: 7 Free Online Courses Available in 2026

Discover 7 free online courses to enhance your skills and career prospects without financial strain.

Vietnam Clarifies Local Env Inspection Powers

Authorities confirm commune level officials can inspect businesses for environmental compliance unde

Vietnam Issues Rules on Tech Forensic Exams

New circular sets standards for forensic experts and regulates examination processes in science and